If you’ve been active on social media over the last few years, you might have picked up on the sentiment that your new phone takes worse photos than your previous one. Whether you’re on Instagram or TikTok, you can easily find tutorials on “how to escape bad camera quality on your iphone” or “how to stop the iPhone auto edit.”

However, ‘quality’ is extremely subjective, so what exactly is ticking people off? A common complaint is that the preview in your camera app doesn’t exactly match the final photo. There is a good reason for this, and to explain it we need to take a step back in history.

What does it mean for a photo to be not only digital but computed?

Traditionally, photographers used film cameras that had little to no digital input. Light would travel through a lens and briefly hit the film, which would later be developed manually. Digital cameras changed this by using a sensor instead of film. Light hits the sensor in much the same way, but instead of the image being chemically and permanently burned in, a tiny chip measures how much light each pixel is exposed to and translates those measurements into numbers that are later assembled into an image.
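To make "translating light into numbers" a little more concrete, here is a minimal sketch. It assumes light levels are expressed as a fraction of the brightest value the sensor can record and that each pixel is stored as an 8-bit number. The function name `quantize` and the sample values are made up for illustration, and real sensors are considerably more involved.

```python
def quantize(exposure, bit_depth=8):
    """Map a light level (0.0 = no light, 1.0 = fully exposed) to an integer pixel value."""
    max_value = 2 ** bit_depth - 1          # 255 for an 8-bit pixel
    clipped = min(max(exposure, 0.0), 1.0)  # anything brighter than 1.0 saturates
    return round(clipped * max_value)

# Hypothetical light levels hitting five pixels; the last one is overexposed.
row_of_light = [0.0, 0.25, 0.5, 1.0, 1.7]
pixel_values = [quantize(level) for level in row_of_light]
print(pixel_values)  # [0, 64, 128, 255, 255]
```

Note how the last two pixels end up with the same number: once a pixel is saturated, any extra brightness is lost, which is exactly the kind of limit that dynamic range describes.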

This is to say that digital cameras have always done some form of “computation”. However, the term “computational photography” entered the mainstream around 2016, largely through Marc Levoy’s work on High Dynamic Range (HDR) algorithms at Google. One of the biggest challenges in photography is accurately capturing scenes with a lot of variation in light levels. In cameras this is measured by dynamic range: the range of brightness, from the lightest to the darkest parts, that a camera can capture in a single shot. Consequently, an HDR image is one that has been processed to have as much dynamic range as possible.

While smartphones, and even regular cameras, have been able to take HDR photos for quite a while, Levoy and his team developed an algorithm that began automatically taking photos in the background from the moment you opened the camera app. Whenever you tapped the shutter button, the camera would stop capturing, take the last few frames and merge them into one “super image”. The results were not just obvious but universally praised as having better detail, lower noise, and, yes, higher dynamic range. At the same time, because this processing was done after the photo was taken, the viewfinder didn’t exactly match the final output and served more as an estimate.
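To see why merging several frames helps with noise, here is a rough sketch. It is not Google's algorithm: a real pipeline also has to align the frames, deal with moving subjects and map the result to displayable tones. This version simply averages each pixel across a simulated burst, and the scene, the noise level and the burst size of 8 are all made-up assumptions.

```python
import random
import statistics

def simulate_frame(scene, noise_sigma, rng):
    """Return one noisy capture of the scene (a flat list of brightness values)."""
    return [pixel + rng.gauss(0.0, noise_sigma) for pixel in scene]

def merge_frames(frames):
    """Merge a burst by averaging each pixel across all frames (a simplification)."""
    return [statistics.fmean(values) for values in zip(*frames)]

def rms_error(image, scene):
    """Root-mean-square difference between an image and the noise-free scene."""
    return (sum((a - b) ** 2 for a, b in zip(image, scene)) / len(scene)) ** 0.5

rng = random.Random(0)  # fixed seed so the run is repeatable
scene = [rng.uniform(0.0, 1.0) for _ in range(10_000)]  # hypothetical "true" brightness
burst = [simulate_frame(scene, noise_sigma=0.1, rng=rng) for _ in range(8)]

single_error = rms_error(burst[-1], scene)           # the last frame on its own
merged_error = rms_error(merge_frames(burst), scene)  # all 8 frames averaged

print(f"single frame error: {single_error:.3f}")
print(f"merged burst error: {merged_error:.3f}")
```

With random noise like this, averaging 8 frames should cut the error to roughly a third of a single frame's, since the noise shrinks with the square root of the number of frames. That is the basic reason a merged burst looks cleaner than any one of the shots that went into it.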

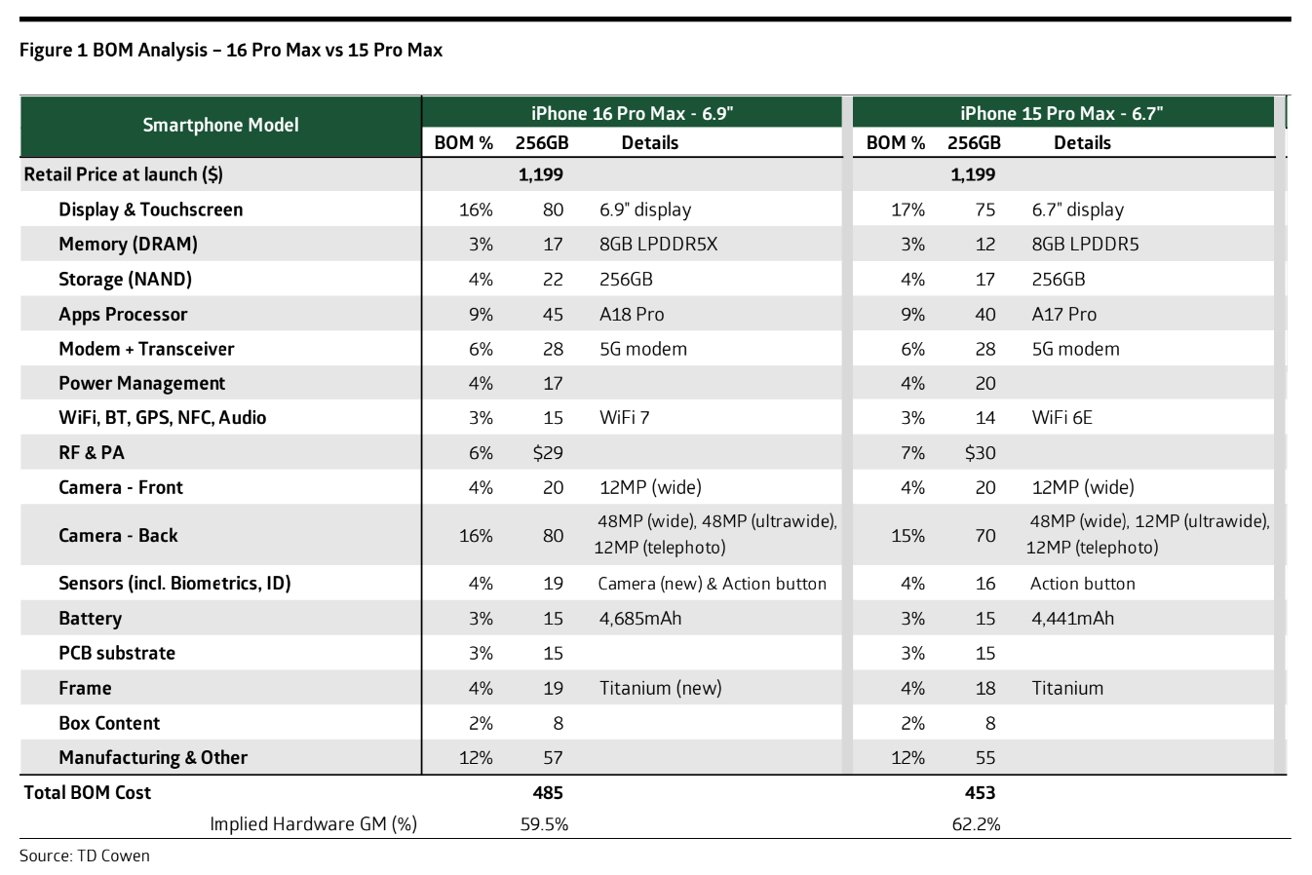

You may ask why all this processing is needed when traditional camera manufacturers such as Sony and Canon could achieve better results with less reliance on software. Well, this is because smartphone cameras are relatively cheap. You might scoff at the idea of a device that can cost over a thousand euros being “cheap”, but take into account that the camera is by no means the most expensive component of your phone. According to TD Cowen, the camera sensors on an iPhone 16 make up less than 20% of the parts cost, and this includes all three of the cameras, not just the main one.

Comparison between the bill of materials of the iPhone 16 and 15.

(Image from TD Cowen and Apple Insider.)

Contrast this with traditional, single-purpose cameras and lenses, which can easily cost thousands of dollars, and you will see that your phone camera sensor is incredibly small and, indeed, cheap.

In order to bridge the gap between traditional cameras and phone cameras, manufacturers have had to resort to tricks such as the previously mentioned HDR merging, night mode, dynamic color temperature settings, etc. But the question remains: why has the sentiment that photos are getting worse risen so suddenly?

Stuck between a rock and a hard place.

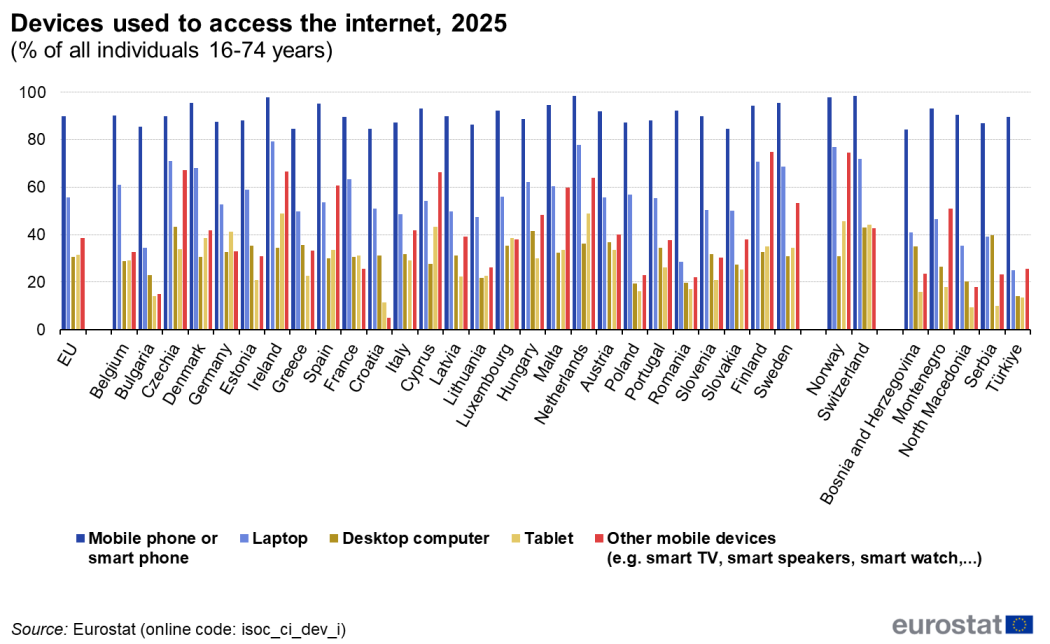

A major factor to consider is the user base. When Sony makes a high-end camera, it has an incredibly niche demographic in mind, a group of people that it can trust will learn how to best use the product. When Apple or Google releases a smartphone, it has to cater to a group of people spanning cultures, socioeconomic classes and generations (in short, everyone). Smartphones are so prevalent that, according to a Eurostat report, in many EU countries nearly 100% of individuals aged 16-74 use one to access the web.

Chart comparing device types used to access the internet in the EU, grouped by country.

This means that your phone camera has to be the best camera for a 16-year-old high schooler taking pictures of the whiteboard during a lesson, for your grandparents capturing their vacation, for your parents taking family pictures over the holidays and, finally, for you as you navigate your everyday life. It has to work all the time and any time, even in traditionally challenging situations such as backlit photos or nighttime shots.

Simply put, it is impossible to educate a user base this large. Manufacturers are therefore constrained: they have to create a camera that will take the “best” photo even when it is being used by someone who does not know the basic rules of photography. This is not necessarily a bad goal, and if anything it serves as a democratic way to create an interest in photography.